More and more companies today are exploring ways to integrate artificial intelligence into their products — and with good reason. AI offers powerful advantages, including personalization, automation, and increased efficiency, all of which can give businesses a significant competitive edge. But as the potential of AI grows, so does the responsibility that comes with it. If you’re planning to move in this direction, it’s essential to address ethical considerations from the very start.

In this article, we outline the key points to think through before development begins — how to minimize risks, why ethical AI is a strategic business decision, and which principles can help you build a solution that’s both technologically robust and socially responsible.

AI Risks: What Can Go Wrong If You Ignore Ethics

When adopting new technologies, it’s essential to consider not only the opportunities but also the limitations. Ethical AI is about recognizing potential risks from the start — and building safeguards against them. This is especially crucial when integrating AI into a product that interacts directly with users.

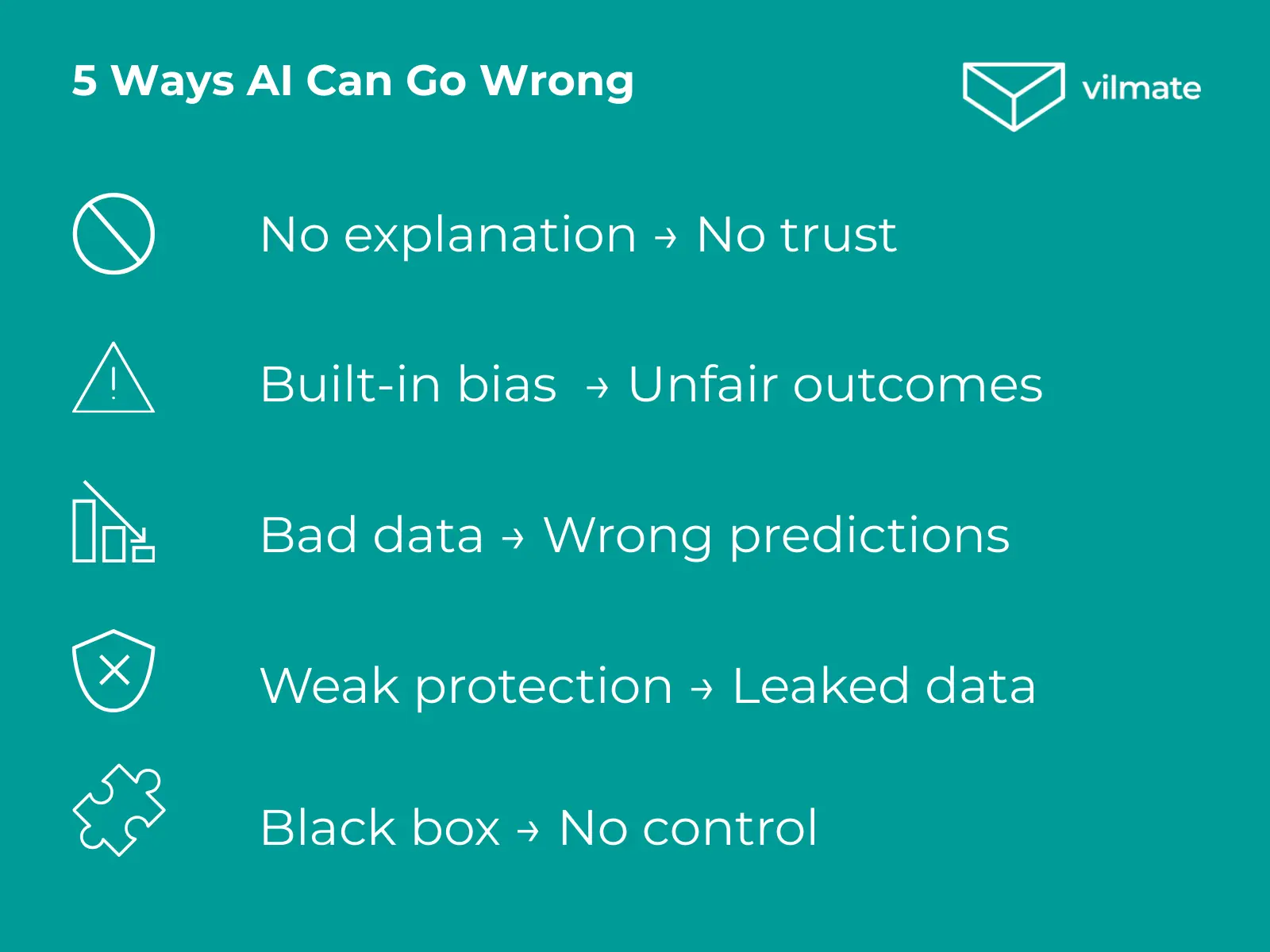

Here are some of the most common challenges companies face:

- Opaque decision-making. Why did the algorithm approve one request and reject another? If the model doesn’t provide a clear rationale, it undermines trust. For example, a bank might deny a loan without explanation, leaving the customer with no way to appeal the decision.

- Hidden bias. If the training data contains existing biases — based on gender, age, or geography — the AI will likely reproduce them. There have been cases where AI-powered resume screeners consistently rated female candidates lower for technical roles, simply because the model was trained on historical data dominated by male hires.

- Data errors. Outdated or incomplete data can cause the system to behave unpredictably, resulting in poor predictions or irrelevant results. For instance, an e-commerce recommendation engine might become “stuck” on a user’s old preferences, hurting sales by displaying the wrong products.

- Security vulnerabilities. The more capable a system is and the more data it processes, the higher the stakes for data protection. A single breach could compromise not only sensitive user data but also your company’s reputation. There have been incidents where confidential customer queries were exposed through AI interfaces due to a lack of basic safeguards.

- The “black box” problem. Without insight into how a model works, it’s challenging to manage outputs, tailor logic to business needs, or scale the solution. This is especially risky in B2B contexts, where clients may require complete transparency at every step of the system’s decision-making process.
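To make the hidden-bias risk above more concrete, here is a minimal sketch of one common check: comparing how often each group receives a positive decision. The group labels, the sample data, and the 0.8 cut-off (borrowed from the "four-fifths" rule of thumb used in employment-selection guidance) are illustrative assumptions, not a complete fairness audit.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions per group.

    `records` is an iterable of (group, approved) pairs, where `approved`
    is True when the model made a positive decision.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical screening outcomes: (group label, passed screening?)
sample = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
REVIEW_THRESHOLD = 0.8  # assumed cut-off; pick one that fits your context
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove the model is unfair, but it is a useful signal that the training data or the decision logic deserves a closer look before launch.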

Ethical AI helps establish clear boundaries, ensuring your system operates fairly, transparently, and securely. It’s not just about avoiding mistakes — it’s about building a resilient product that users can genuinely trust.

How Ethical AI Differs from Just AI

Many companies turn to AI to accelerate processes, conserve resources, or improve their products. But there’s a significant difference between AI that “works” and AI that’s ethical. The distinction lies less in the technology itself and more in the approach behind it.

Standard AI may be trained on historical data, make decisions automatically, and operate on a “set it and forget it” basis. It doesn’t always account for the consequences of its actions, often can’t explain its reasoning, and can reproduce harmful patterns, including biases present in its training data.

Ethical AI, by contrast, is guided by a responsible and forward-looking approach. It is:

- Thoughtfully designed with a clear understanding of its impact on users and the business;

- Trained on well-vetted, diverse, and secure datasets to avoid bias and distortion;

- Equipped with tools for human oversight, transparency, and ongoing adjustments;

- Regularly audited — both from a technical standpoint and with ethical considerations in mind.
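As an illustration of the human-oversight point in the list above, the sketch below routes a decision to a person whenever the model is unsure or the stakes are high. The `Decision` type, the `route` function, and the 0.85 confidence floor are hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass

CONFIDENCE_FLOOR = 0.85  # assumed threshold; tune per product and risk level


@dataclass
class Decision:
    outcome: str       # e.g. "approve", "reject", or "pending"
    confidence: float  # the model's own score in [0, 1]
    decided_by: str    # "model" or "human_review"


def route(outcome: str, confidence: float, high_impact: bool) -> Decision:
    """Let the model decide only when it is confident and the stakes are low."""
    if high_impact or confidence < CONFIDENCE_FLOOR:
        # Hold the outcome until a person has reviewed the case.
        return Decision("pending", confidence, "human_review")
    return Decision(outcome, confidence, "model")


print(route("approve", 0.95, high_impact=False))
print(route("reject", 0.95, high_impact=True))
```

Recording who made each decision (`decided_by`) also gives auditors a simple trail to follow later.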

As a result, ethical AI offers clear long-term benefits. Such a system:

- Operates fairly and transparently, not just efficiently;

- Anticipates and reduces risks before they become real problems;

- Earns greater trust from users, partners, and regulators alike;

- Scales more easily and stays compliant as legal and social expectations evolve.

For businesses, it’s not about being faster or more cutting-edge — it’s about being sustainable. Ethical AI helps create products that can withstand not just technical demands, but also the scrutiny of the public, investors, and regulators.

Navigating Ethical AI Regulations

Ethical considerations in AI are no longer just a matter of internal policy — concrete laws and standards are emerging, and companies will need to comply.

- AI Act (EU). The first comprehensive law on artificial intelligence. It classifies AI systems by risk level and introduces strict requirements for transparency, data quality, human oversight, and documentation, particularly for high-risk systems. It entered into force in August 2024, and its obligations apply in phases starting in February 2025.

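- GDPR. Already restricts decisions based solely on automated processing that have legal or similarly significant effects on a person. It also gives people the right to meaningful information about the logic involved, to human intervention, and to contest such decisions.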

- ISO/IEC 42001. An international standard that specifies requirements for an AI management system, covering safety, resilience, and ethical practices. It is increasingly adopted by companies operating in mature markets.

- UNESCO. Since 2021, over 190 countries have endorsed the global Recommendation on the Ethics of Artificial Intelligence, which promotes fairness, explainability, human rights, and sustainable development.

Even if you’re not operating in the EU, these frameworks are shaping the global direction of AI governance. Aligning with them means preparing your product for international markets and reducing regulatory and reputational risks from the outset.

How to Build Ethical AI

If you’re seriously considering integrating AI into your product, ethics shouldn’t be an afterthought — it should be a starting point. Ethical thinking doesn’t complicate the process; it makes your system more resilient from the ground up.

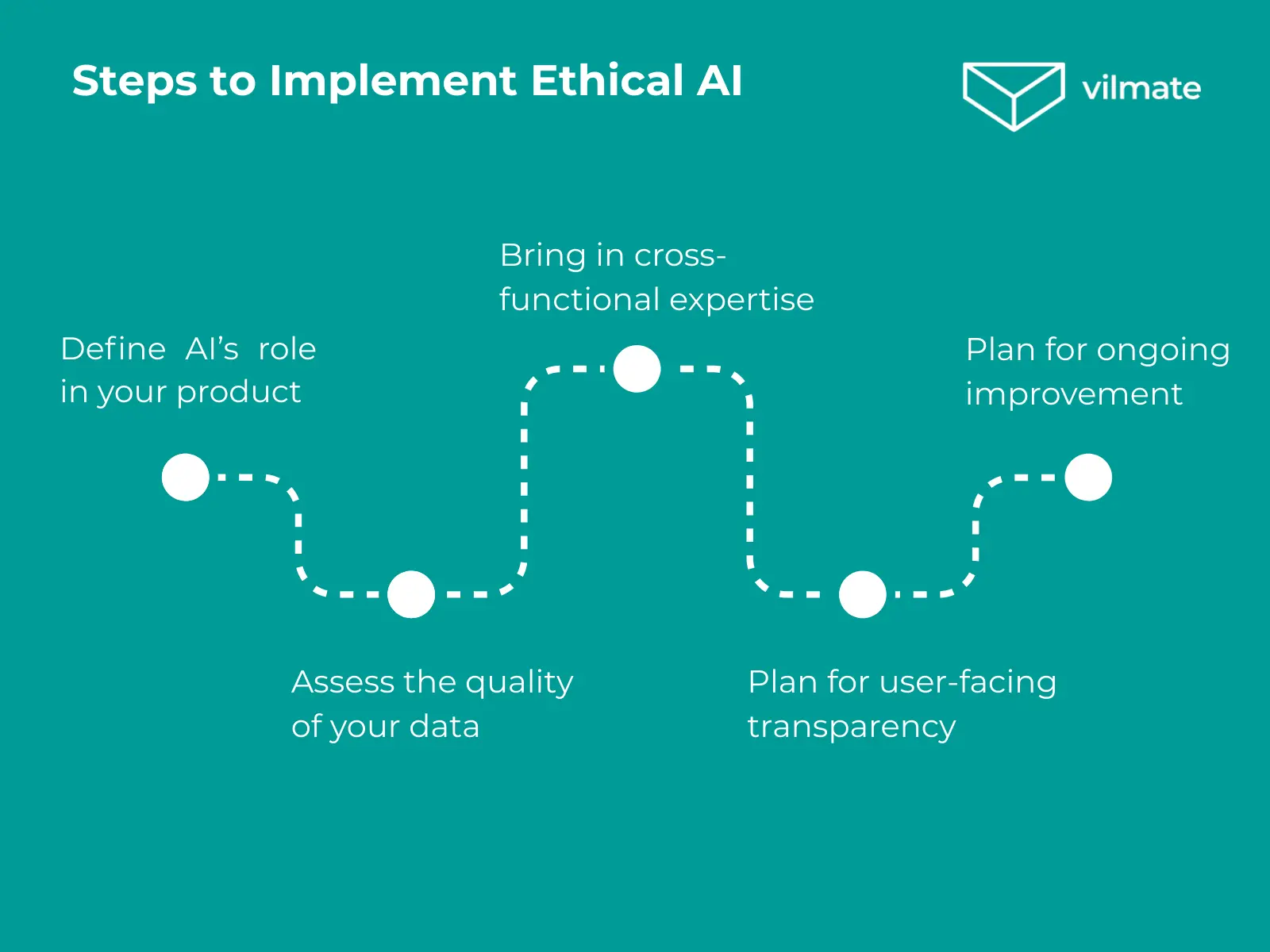

Where to begin with ethical AI implementation?

1. Define AI’s role in your product

Clearly outline what tasks the AI will handle, what data it will use, and where human oversight is necessary. This establishes transparent boundaries from the outset.

2. Assess the quality of your data

Data is the foundation of any model. Ensure it’s up-to-date, representative, and free from biases that could distort the outcomes.

3. Bring in cross-functional expertise

Involve specialists from development, UX, legal, and marketing. Each brings a unique perspective on potential risks, and collaboration early on helps identify vulnerabilities before launch.

4. Plan for user-facing transparency

Even if the model’s logic is complex, users should understand that AI is involved in decision-making — and know how they can interact with or challenge it.

5. Plan for ongoing improvement

AI is not a static system. Regular audits, testing, and user feedback help refine the model over time, ensuring it functions as intended.
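The data-quality and audit steps above could be backed by a small recurring check like the sketch below. It reports how much of a dataset is stale and which segments are barely represented. The field names (`updated`, `segment`), the one-year freshness window, and the 10% minimum share are assumptions made for illustration.

```python
from collections import Counter
from datetime import date, timedelta

MAX_AGE = timedelta(days=365)  # assumed freshness window
MIN_GROUP_SHARE = 0.10         # assumed minimum share for any segment


def audit_dataset(rows, today):
    """Summarize stale records and under-represented segments.

    `rows` is a list of dicts with hypothetical keys "updated" (a date)
    and "segment" (any label used to check representation).
    """
    if not rows:
        return {"stale_share": 0.0, "underrepresented": []}
    stale = sum(1 for row in rows if today - row["updated"] > MAX_AGE)
    counts = Counter(row["segment"] for row in rows)
    underrepresented = sorted(
        segment for segment, n in counts.items() if n / len(rows) < MIN_GROUP_SHARE
    )
    return {"stale_share": stale / len(rows), "underrepresented": underrepresented}


# Hypothetical dataset: 20 records, one stale, one from a rarely seen segment.
today = date(2025, 1, 1)
rows = (
    [{"updated": date(2024, 9, 1), "segment": "urban"}] * 18
    + [{"updated": date(2022, 3, 1), "segment": "urban"}]
    + [{"updated": date(2024, 9, 1), "segment": "rural"}]
)
report = audit_dataset(rows, today)
print(report)
```

Running a check like this on a schedule, and again before each retraining, turns "regular audits" into something a team can track over time.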

An ethical approach not only helps avoid reputational risks, it also makes it easier to adapt to shifting market expectations and legal requirements. Ethical AI isn’t a roadblock. It’s a way to make sure your system serves both your business and your users.

Conclusion

Ethical AI isn’t just another item on a checklist. It’s a way to build a mature, reliable, and future-ready product.

If you’re planning to integrate AI and want to take a thoughtful approach, we’re here to help. At Vilmate, we combine technical expertise with a strong focus on business goals and responsibility.

Interested in an ethical AI journey? Let’s connect.